On April 6, 2026, NASA’s four-person Artemis II crew became the first humans in more than fifty years to fly past the Moon. Reid Wiseman, Victor Glover, Christina Koch, and Canadian Space Agency astronaut Jeremy Hansen spent seven hours passing over the lunar far side, captured thousands of photographs, and sent the first official batch back to Earth a day later. Within hours, millions of people had seen “Artemis II images” on their phones. A lot of those images never left a GPU.

The most-shared “Artemis II” photo on social media in the 72 hours after the flyby — a striking Earthrise through the Orion spacecraft’s window — was not taken by anyone in the Artemis II crew. It was generated by AI, based on William Anders’ iconic 1968 Apollo 8 Earthrise, right down to the cloud patterns. Fact-checkers at Full Fact and Lead Stories confirmed it carried Google’s SynthID watermark — the invisible marker embedded in content made with Google’s AI tools — and Hive Moderation’s detector scored it at 100% likely AI-generated.

A second viral fake, purporting to show Earth through an Orion spacecraft window, was flagged for a simpler reason: the window had five sides. The real Orion capsule windows have four. None of the AI-generated images appeared in NASA’s official gallery.

This isn’t just a space story. It’s a photography story — and it’s about a problem every photographer, editor, and visual-media reader is now living inside. AI image tools have made it trivially easy to fabricate convincing “official-looking” photography in any genre. Space imagery was the battleground this week. It won’t be the last.

What actually happened during the Artemis II flyby

NASA’s Artemis II mission lifted off on April 1, 2026. On April 6, the crew completed a seven-hour pass over the far side of the Moon, including a roughly 40-minute loss-of-signal period when the spacecraft was out of contact with Earth. The astronauts photographed Earth, the lunar surface, a 54-minute solar eclipse created by the Moon passing between them and the Sun, and six meteoroid impact flashes on the darkened lunar surface.

NASA published the official gallery the following day at nasa.gov/gallery/lunar-flyby. The images are credited, captioned, and traceable to specific cameras and astronauts. Each one carries an exact timestamp and, in many cases, the focal length and exposure settings. That matters: those details are part of what makes a NASA image verifiable in a way an AI render can’t be.

By the time NASA’s real gallery went live, fake Artemis II images had already been circulating for more than twelve hours.

Why the fakes fooled so many people

Three things made this round of fakes unusually effective.

1. They looked like what people already expected to see. The Apollo 8 Earthrise is one of the most reproduced photographs in human history. When an AI-generated image shows Earth hanging above the lunar limb with familiar cloud patterns, our brains don’t flag it as suspicious — they flag it as confirmation. It matches the image we already have in our heads, so it feels correct.

2. The cinematic quality was seductive. Real space photography from inside a crew capsule is often slightly imperfect: glare on the window, awkward framing, visible interior hardware, high-ISO noise, blown-out highlights where Earth’s daylit face meets the black of space. AI-generated fakes tend to be too clean, too well-composed, too cinematic. Ironically, the very things that make them look more beautiful than the real thing are the things that should tip you off.

3. Speed of sharing outpaced fact-checking. A Facebook account named “Space Voyager” posted the fake Earthrise on April 6, 2026, before NASA had released the official gallery. By the time NASA’s real images went up the next morning, the fake had been re-shared thousands of times. First-mover advantage applies to misinformation too.

How to spot fake AI space images

There’s no single magic trick, but there’s a reliable six-point checklist. Apply all six to any space image you’re about to share.

1. Source first, everything else second. If the image isn’t in NASA’s official gallery (images.nasa.gov or nasa.gov/gallery/…), a credentialed news agency’s feed, or an astronaut’s verified social account, treat it as unverified. NASA publishes thousands of high-resolution Artemis II images with full metadata. A “photo from the mission” that only exists on a meme account is a red flag, not a scoop.

2. Soft, cinematic lighting is suspicious. Space lighting is harsh and directional. The sunlit side of the Moon is extremely bright; shadows are jet black; there’s no atmosphere to soften the contrast. AI images often add a soft glow (subtle rim lighting, atmospheric haze, gentle gradients) because it looks pretty. With no air to scatter light, that kind of haze and glow has no physical source in vacuum.

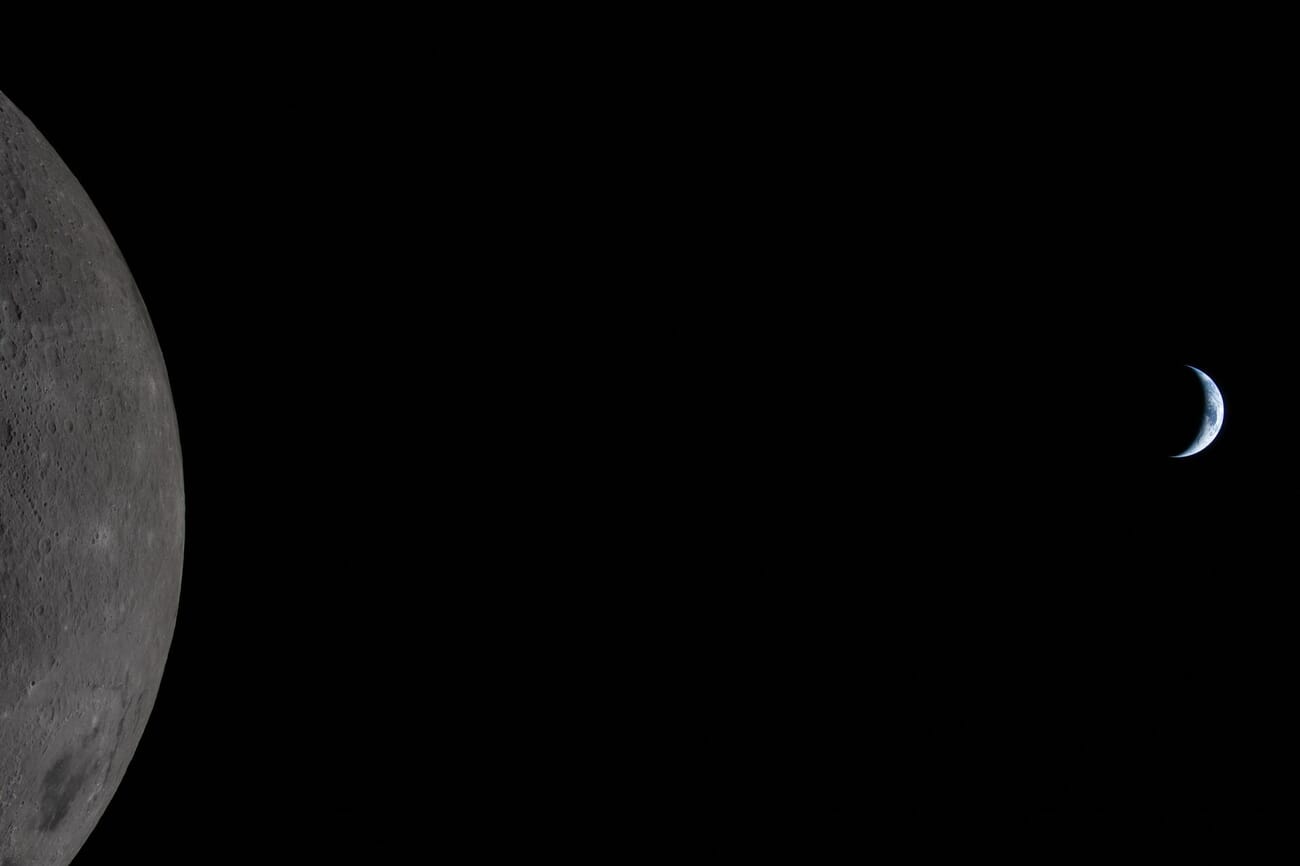

3. Scale relationships are the fastest giveaway. Seen from near the Moon, Earth is only about 2 degrees across, a bit under four times the width of the full Moon in our own sky. In anything but a telephoto shot it appears as a relatively small crescent or disc, not a massive glowing marble dominating the frame. AI images love oversized Earths because they’re more dramatic. Real NASA Earth-from-Moon images rarely have Earth occupying more than a small fraction of the frame.

4. Cinematic composition is a tell. NASA astronauts are trained photographers, but they’re also busy running a mission. Real mission photos are often framed slightly off, shot through dirty windows, and include visible spacecraft hardware. If an image looks like a Hollywood establishing shot — perfect rule-of-thirds, ideal balance, nothing cluttering the frame — ask why an astronaut would have taken a photo that looks like concept art.

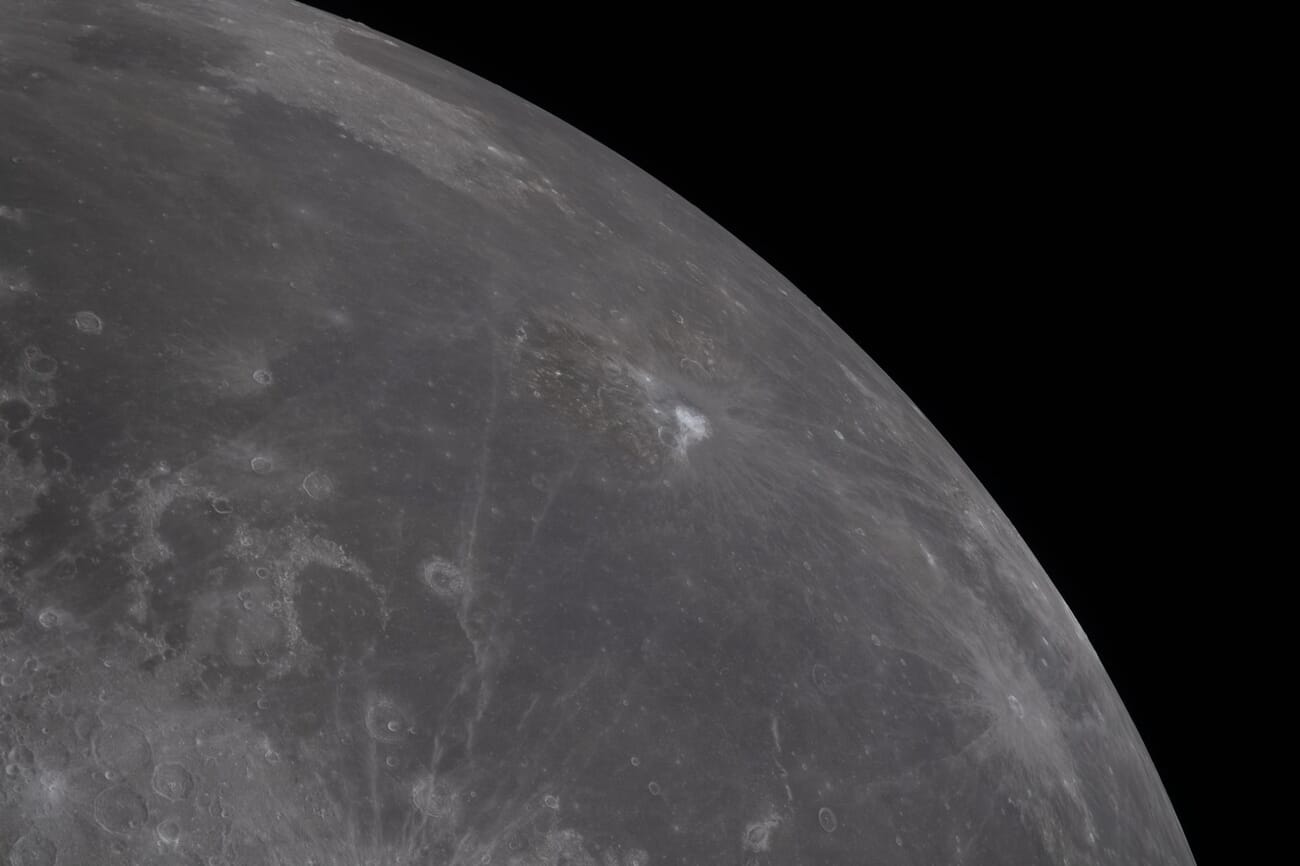

5. Texture and detail: zoom in. Lunar regolith has a specific visual texture: fine dust, sharp micro-shadows, millimeter-scale grit. AI-generated lunar surfaces tend to look painted, smoothed, or stamped — with repeating patterns if you look closely enough. Spacecraft hardware has rivets, wear, and dirt. AI renders it with unnatural perfection.

6. Check for AI watermarks. Google’s SynthID embeds an invisible marker in images generated with Google’s AI tools, and Google’s own services, including Gemini, can detect it. Third-party classifiers such as Hive Moderation’s AI-detection service offer a separate check that estimates how likely an image is to be AI-generated. The fact-checkers who flagged the viral “Artemis II” Earthrise relied on both signals. For professionals, adding an AI-detector check to your image-verification workflow takes under a minute.
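If you review images in bulk, the checklist above can be written down as a small script. The sketch below is only an illustration: the check names, the `OFFICIAL_HOSTS` list, and both function names are our own assumptions, and the five visual checks still need a human to answer them. Only the source check is automated, by testing whether a URL's host is nasa.gov or one of its subdomains (which covers images.nasa.gov).

```python
from urllib.parse import urlparse

# Assumption: hosts this sketch treats as official. The checklist names NASA's
# sites; add news agencies or other sources you trust.
OFFICIAL_HOSTS = ("nasa.gov",)

# Hypothetical short names for the six checks, in checklist order.
CHECKS = (
    "official_source",
    "harsh_lighting",
    "plausible_scale",
    "imperfect_framing",
    "natural_texture",
    "no_ai_watermark",
)


def is_official_source(url: str) -> bool:
    """True if the URL's host is an official host or a subdomain of one."""
    # URLs without a scheme have no parsed hostname and are treated as unverified.
    host = (urlparse(url).hostname or "").lower()
    return any(host == h or host.endswith("." + h) for h in OFFICIAL_HOSTS)


def failed_checks(url: str, observations: dict[str, bool]) -> list[str]:
    """Return the checks that failed or were never answered, in checklist order."""
    results = dict(observations)
    # The source check is computed from the URL, overriding any manual answer.
    results["official_source"] = is_official_source(url)
    return [name for name in CHECKS if not results.get(name, False)]


# Usage: an image that passes every visual check but lives on an unknown site.
looks_fine = {name: True for name in CHECKS}
print(failed_checks("https://example.com/earthrise.jpg", looks_fine))
# ['official_source']
```

Matching on the parsed hostname rather than searching the URL string matters here: a lookalike address such as nasa.gov.example.com contains "nasa.gov" but is not a NASA host.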

Real images vs. AI demos: a side-by-side

To make the test concrete, we generated five intentionally fake “NASA-style” Artemis II images using Nano Banana 2 (Gemini 3 Flash Image). Every one of them is believable at a glance. Every one of them breaks at least one of the rules above.

⚠️ Important: Every image below labeled “AI-generated” was created by PhotoWorkout for educational purposes. None of them are real NASA images. Do not reshare them out of context.

Example 1: Earth through a porthole

What’s wrong: The window is circular. The Orion spacecraft has four-sided trapezoidal windows. The lighting on the window frame is softly rim-lit: look at how the metallic edges glow. There’s no real light source in space that would create that gentle wraparound glow on a single porthole. Earth also fills roughly a third of the frame. In a shot wide enough to include the window frame, that implies a camera far closer to Earth than the lunar flyby, where Earth should appear much smaller.
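To put a number on the scale problem, here is a rough back-of-the-envelope sketch. It assumes the mean Earth-Moon distance rather than Orion's actual range at the moment of any photo, and a hypothetical 24 mm lens on a 36 mm wide full-frame sensor, which is not a claim about the crew's equipment.

```python
import math

EARTH_RADIUS_KM = 6371.0          # mean radius of Earth
EARTH_MOON_DISTANCE_KM = 384_400  # mean distance; an assumption, not Orion's exact range


def angular_diameter_deg(radius_km: float, distance_km: float) -> float:
    """Apparent diameter of a sphere seen from distance_km (measured to its centre)."""
    return math.degrees(2 * math.asin(radius_km / distance_km))


def fraction_of_frame_width(radius_km: float, distance_km: float,
                            focal_length_mm: float,
                            sensor_width_mm: float = 36.0) -> float:
    """Share of the frame width the sphere covers with a simple rectilinear lens."""
    half_angle = math.asin(radius_km / distance_km)
    image_diameter_mm = 2 * focal_length_mm * math.tan(half_angle)
    return image_diameter_mm / sensor_width_mm


size = angular_diameter_deg(EARTH_RADIUS_KM, EARTH_MOON_DISTANCE_KM)
share = fraction_of_frame_width(EARTH_RADIUS_KM, EARTH_MOON_DISTANCE_KM,
                                focal_length_mm=24)  # hypothetical wide lens
print(f"Earth is about {size:.1f} degrees across")     # about 1.9 degrees
print(f"and covers about {share:.1%} of the frame width")  # about 2.2%
```

Under those assumptions Earth spans a couple of percent of a wide-angle frame, nowhere near a third. A longer lens enlarges Earth, but it also crops out the window frame that the fake shows.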

Example 2: The dramatic moon horizon

What’s wrong: Earth and the Milky Way are visible together in the same frame, which is effectively impossible at any realistic exposure — Earth is much too bright, the Milky Way far too dim. Either the Earth would be blown out or the Milky Way would be invisible. Earth is also too large and too high-contrast against the black sky. In real photos from lunar distance, Earth is a small, nuanced object, not a glowing marble parked in the upper third.
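The exposure mismatch can be estimated with the standard exposure-value formula. The settings below are our assumptions, not figures from NASA: the "sunny 16" rule of thumb for a sunlit subject, a typical Milky Way exposure used by night-sky photographers, and a generous 14 stops of sensor dynamic range.

```python
import math


def ev100(f_number: float, shutter_s: float, iso: float) -> float:
    """Exposure value of the given camera settings, normalised to ISO 100."""
    return math.log2(f_number ** 2 / shutter_s) - math.log2(iso / 100)


def fits_in_one_exposure(gap_stops: float, sensor_stops: float = 14.0) -> bool:
    """True if two subjects this far apart in brightness fit one frame."""
    return gap_stops <= sensor_stops


# Assumed settings: f/16 at 1/100 s, ISO 100 for sunlit Earth ("sunny 16").
sunlit_earth = ev100(16, 1 / 100, 100)
# Assumed settings: f/2.8 for 20 s at ISO 3200, a common Milky Way exposure.
milky_way = ev100(2.8, 20, 3200)

gap = sunlit_earth - milky_way
print(f"Brightness gap: about {gap:.0f} stops")  # about 21 stops
print(fits_in_one_exposure(gap))                 # False
```

A gap of roughly 21 stops is a brightness ratio of about two million to one, well beyond what a single exposure records. That is why real frames show either a properly exposed Earth on a black sky or stars with a blown-out Earth.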

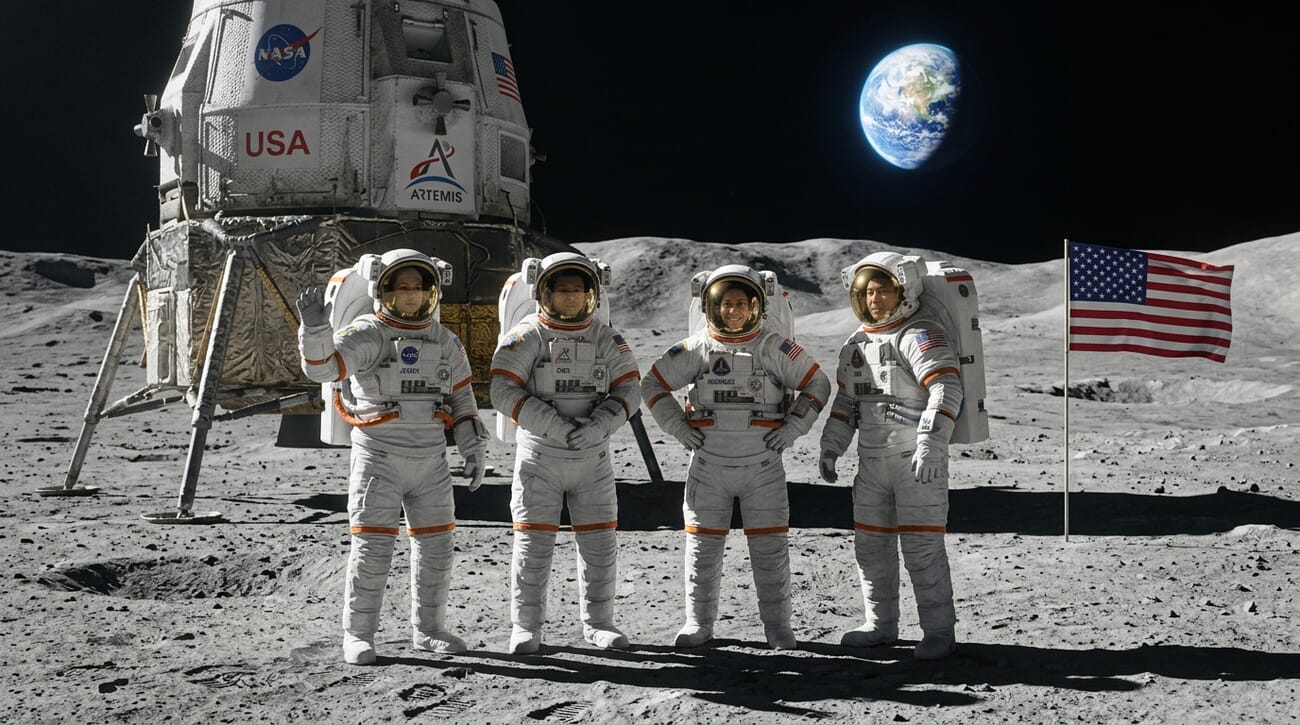

Example 3: Astronauts on the lunar surface

What’s wrong: The Artemis II crew does not land on the Moon. Artemis II is a lunar flyby mission — the crew never leaves the Orion capsule. This image is contextually impossible, regardless of how good it looks. Any image showing the Artemis II crew standing on the lunar surface is fake by definition. (A crewed landing is planned for Artemis III, currently scheduled for later in the decade.) If you’re about to share a “photo from Artemis II” of astronauts on the Moon, you already have your answer.

Example 4: View from a spacecraft window

What’s wrong: The window is a clean rectangle. Real Orion windows are four-sided and trapezoidal. The Moon is impossibly detailed, filling the frame at a scale that would only make sense if the spacecraft were pressed directly against the surface. The cinematic contrast is too heavy — crushed shadows, over-sharpened craters, and a detailed astronaut hand on a control panel, which is exactly the kind of prop AI image generators add to sell verisimilitude.

Example 5: The pristine lunar landscape

What’s wrong: Earth is too large and too vivid for this distance. The lunar foreground has the “painted” look of an AI render — rocks that feel sculpted rather than eroded, a horizon that’s suspiciously smooth, and lighting that doesn’t quite match Earth’s phase. The whole image is composed like a concept illustration, with Earth centered, a leading-line ridge, and perfect depth-of-field separation. Real lunar surface photos almost never look this polished.

What the real images actually look like

For contrast, here are three more genuine Artemis II images from NASA’s April 6 flyby. Note the harsh highlights, the dark background with almost no visible stars (because the exposure is set for the bright Earth-and-Moon scene), and the contextual details that are hard to fake: specific timestamps, identified lunar features, and cross-referenced commentary from the Artemis science team.

Each of these images is linked in the sources at the bottom of this post to its exact entry on images.nasa.gov. You can verify them directly.

The takeaway for photographers

This week’s fake-image wave is a warning about something photographers have been watching for years: the cost of a convincing visual has collapsed to zero. Anyone with API access and a plausible prompt can generate a “photograph” of an event that never happened, in a style indistinguishable from the real thing to a distracted scroller.

For photographers specifically, three things matter.

Provenance is your most valuable asset. Your name, your metadata, your publisher relationships, your camera-and-lens EXIF, your raw files — all of these are harder to fake than the image itself. Build and protect your verification chain. If you shoot for news or documentary, the world needs your provenance more than it needs your polish.

Teach your audience to look, not glance. The six-point checklist in the infographic above isn’t exotic. It’s about training your eye to pause before re-sharing. If you run a photography blog, a newsletter, or a social feed, you’re in a position to raise the visual-literacy floor for your community.

Use AI image tools — but disclose clearly. AI image generation is a legitimate tool for illustration, concept work, and educational demos (like the five fakes in this post). The standard for responsible use is unambiguous: label, disclose consistently, and never pass synthetic imagery off as documentary evidence. If you’re unsure whether you’re on the right side of the line, assume you’re not and label it.
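On the provenance point, one low-effort habit is to record a cryptographic fingerprint of each original file at the time of the shoot. The sketch below is only one possible approach, and the function names and folder layout are hypothetical. It builds a SHA-256 manifest for a folder of raw files so you can later show that a file is byte-for-byte unchanged. It does not prove who took the picture; it only complements your metadata and publisher records.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """SHA-256 hex digest of a file, read in chunks so large raws fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(folder: Path) -> dict[str, str]:
    """Map each file name in the folder (not subfolders) to its digest."""
    return {p.name: sha256_of(p) for p in sorted(folder.iterdir()) if p.is_file()}


def is_unchanged(path: Path, manifest: dict[str, str]) -> bool:
    """True if the file's current digest matches the one recorded earlier."""
    return manifest.get(path.name) == sha256_of(path)
```

Store the manifest somewhere separate from the images, for example in an email to yourself or your editor, so it carries its own timestamp.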

The Artemis II crew is scheduled to splash down off the coast of San Diego today at 8:07 PM EDT. When they do, there will be real photographs — with names, times, cameras, and context. Share those. Verify the rest.

Sources used for this article:

Featured image: NASA / Artemis II, public domain. AI demo images created by PhotoWorkout using Nano Banana 2 (Gemini 3 Flash Image) for educational purposes.
