- Runway demonstrated a new AI video model at NVIDIA GTC that generates HD video in real time — with time-to-first-frame under 100 milliseconds.

- The model runs on NVIDIA’s next-gen Vera Rubin architecture and works more like a game engine, streaming frames continuously instead of rendering clips in batches.

- Current tools like Sora and Veo take seconds to minutes per clip — this is a fundamental shift toward live, interactive AI video.

- For photographers, this could transform behind-the-scenes content and social media workflows — but raises serious deepfake concerns.

Runway just broke one of the last major barriers in AI video. At NVIDIA’s GTC conference last week, the company demonstrated a new model that generates HD video in real time — responding to text prompts in under 100 milliseconds. That’s faster than a human blink.

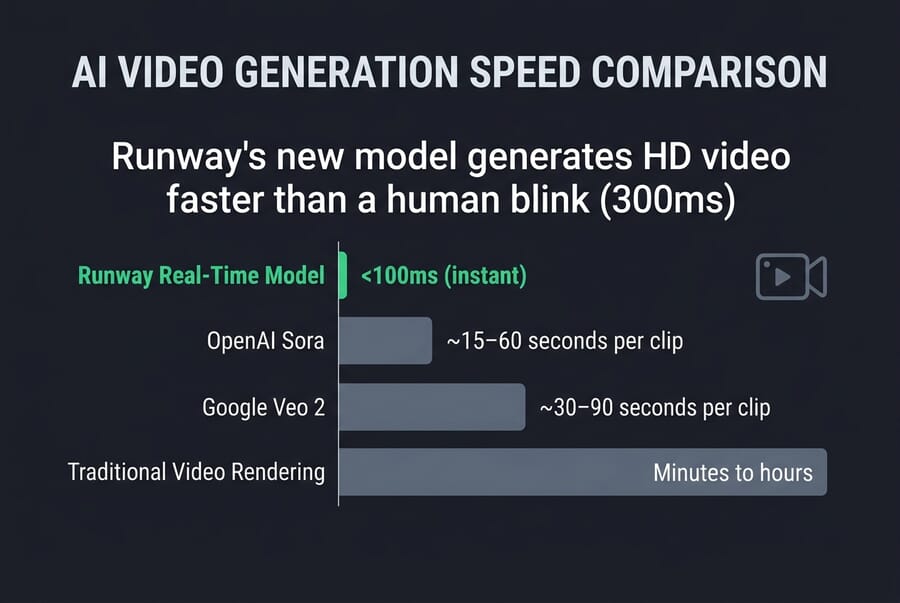

To put that in perspective: current AI video tools from OpenAI (Sora) and Google (Veo) take anywhere from 15 seconds to about two minutes to produce a single clip. Runway’s new model doesn’t just speed things up; it changes how AI video works, shifting from batch rendering to continuous frame streaming.

How Runway’s Real-Time Model Works

The as-yet-unnamed model was developed in partnership with NVIDIA and runs on the company’s next-generation Vera Rubin architecture — purpose-built hardware designed for the demands of real-time generative AI. Rather than processing an entire video clip and delivering it as a finished file, the model works more like a game engine: it streams frames continuously as they’re generated.

According to Runway’s announcement, the system achieves a time-to-first-frame under 100ms, meaning the AI avatar or scene begins responding almost instantly after a prompt is entered. The demo showed an AI-generated character reacting to prompts in real time — moving, responding, and adapting on the fly.
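The difference between batch rendering and frame streaming can be illustrated with a minimal sketch. This is not Runway’s implementation: the function names are hypothetical, and the 40 ms per-frame cost is an assumed figure chosen only to make the arithmetic concrete. Time is simulated rather than measured.

```python
from typing import Iterator, List, Tuple

FRAME_COST_MS = 40  # assumed per-frame generation cost, NOT a Runway figure


def render_batch(num_frames: int) -> Tuple[List[str], int]:
    """Batch style: nothing is viewable until every frame is finished."""
    frames = [f"frame-{i}" for i in range(num_frames)]
    first_visible_ms = num_frames * FRAME_COST_MS  # viewer waits for the whole clip
    return frames, first_visible_ms


def stream_frames(num_frames: int) -> Iterator[Tuple[str, int]]:
    """Streaming style: yield each frame with the simulated time it becomes visible."""
    elapsed_ms = 0
    for i in range(num_frames):
        elapsed_ms += FRAME_COST_MS
        yield f"frame-{i}", elapsed_ms  # frame is handed over as soon as it exists


def time_to_first_frame_streaming(num_frames: int) -> int:
    """Simulated time until the first streamed frame is visible."""
    _, visible_at_ms = next(stream_frames(num_frames))
    return visible_at_ms


# A 5-second clip at 24 frames per second is 120 frames.
_, batch_ttff_ms = render_batch(120)
stream_ttff_ms = time_to_first_frame_streaming(120)
print(f"batch: first frame visible after {batch_ttff_ms} ms")
print(f"stream: first frame visible after {stream_ttff_ms} ms")
```

Under these assumed numbers, the total work is identical in both cases. What changes is when the viewer sees the first result: after the whole clip in the batch case, after a single frame in the streaming case.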

“GWM-1 is our first step toward models that don’t just generate pixels — they understand and simulate the world behind them,” said Cristóbal Valenzuela, Runway’s co-founder and CEO.

The real-time model builds on Runway’s GWM-1 (General World Model), the company’s first family of physics-aware world models, which can simulate environments for robotics training, virtual worlds, and interactive avatars. The Vera Rubin platform, with 50 petaflops of inference compute per GPU, is designed to accelerate these sustained, long-context workloads.

How Fast Is It Compared to Other AI Video Tools?

The speed gap between Runway’s new model and existing tools is staggering:

- Runway Real-Time Model: Under 100ms to first frame, continuous streaming

- OpenAI Sora: 15–60 seconds per clip

- Google Veo: 30–120 seconds per clip

- Runway Gen-4.5: Several seconds per clip (currently the top-rated video generation model)
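
As a rough worked example, the figures in the list above can be turned into ratios. One caveat: this compares time to first frame with time to a finished clip, which are not the same measurement, so the result describes how long a viewer waits before seeing anything rather than raw model throughput. Because the real-time figure is an upper bound ("under 100ms"), the ratios are lower bounds. The variable and function names are illustrative.

```python
# Figures quoted in the list above, converted to milliseconds.
TTFF_UPPER_BOUND_MS = 100  # "under 100ms to first frame"
CLIP_TIMES_MS = {
    "OpenAI Sora": (15_000, 60_000),   # 15-60 seconds per clip
    "Google Veo": (30_000, 120_000),   # 30-120 seconds per clip
}


def min_wait_ratio(clip_ms: int, ttff_ms: int = TTFF_UPPER_BOUND_MS) -> int:
    """How many times longer the wait for a clip is than the wait for a first frame."""
    return clip_ms // ttff_ms


ratios = {
    name: (min_wait_ratio(low), min_wait_ratio(high))
    for name, (low, high) in CLIP_TIMES_MS.items()
}
for name, (low, high) in ratios.items():
    print(f"{name}: at least {low}x to {high}x longer before anything is visible")
```

With the quoted figures, that works out to at least 150x to 600x for Sora and 300x to 1200x for Veo.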

The difference isn’t just about convenience. Real-time generation opens up entirely new use cases that batch-processed video simply can’t serve — live broadcasts, interactive experiences, and responsive virtual characters.

What This Could Enable

The implications are enormous. Real-time AI video generation could power:

- Live broadcasts with AI-generated characters, backgrounds, or entire virtual worlds

- Interactive gaming where environments are generated on the fly rather than pre-built

- Virtual production for film and TV — real-time AI backdrops replacing LED walls

- Social media content that’s generated and customized in real time for each viewer

Google’s Project Genie, announced in January, hinted at this direction with its world-building tool that creates explorable environments from single photos. But Runway’s demo takes it a step further by showing video generation that’s fast enough for live interaction.

What This Means for Photographers

If you’re a photographer who’s been watching AI video from the sidelines, this is the development that should get your attention. Here’s why:

Behind-the-scenes content becomes instant. Imagine generating real-time AI video walkthroughs of a shoot concept for a client, showing them the mood, setting, and flow before you’ve even picked up a camera. Tools like this could replace mood boards entirely.

Social media workflows could transform. Instead of spending hours editing video content for Reels or TikTok, photographers could generate supporting video clips in real time to complement their photo work. The barrier between still photography and video content keeps shrinking.

Virtual staging and product visualization. Real estate and product photographers could use real-time AI video to show spaces or products in motion — rotating views, lighting changes, or staged environments generated instantly during a client call.

If you want to understand the broader trajectory of how AI is reshaping photography workflows and jobs, we’ve covered the full landscape in depth.

The Deepfake Problem Just Got Harder

There’s no sugarcoating it: real-time AI video generation makes the deepfake problem significantly worse. Current detection tools struggle with pre-rendered AI video that takes minutes to create. A model that generates convincing video in real time — fast enough for live video calls — could enable scams and impersonation at a scale we haven’t seen before.

The technology still has limitations. Interesting Engineering notes that maintaining character consistency remains a challenge: AI-generated faces tend to drift and change the more the model iterates. But these are the kind of engineering problems that tend to improve with each model generation.

Sony is already working on solutions from the camera side. The company’s Camera Verify system uses autofocus depth data to detect AI deepfakes in video, essentially using real-world 3D information that AI generators can’t replicate. As AI video gets faster and more convincing, authentication at the point of capture becomes even more critical.

Meanwhile, organizations are racing to create universal ‘AI-free’ certification logos that could help audiences distinguish human-made content from AI-generated material — though real-time generation makes that distinction harder to enforce.

What’s Next

The model is still a research preview — there’s no public release date or pricing yet. But the fact that Runway’s existing Gen-4.5 model was ported to the Vera Rubin platform in a single day suggests the infrastructure for commercial deployment is already in place.

NVIDIA’s Richard Kerris called video generation and world models “a new era of AI — one that understands and simulates the physical world.” With NVIDIA’s resources behind it, expect rapid iteration. The gap between “impressive demo” and “tool you can use” is shrinking fast.

For photographers and content creators keeping track of how fast AI is moving, the latest AI photography statistics paint a clear picture of an industry in rapid transformation.

Frequently Asked Questions

How fast is Runway’s new real-time AI video model?

The model achieves time-to-first-frame under 100 milliseconds — faster than a human blink. It streams HD video frames continuously rather than rendering complete clips, making it fundamentally different from tools like Sora or Veo that take seconds to minutes per clip.

What hardware does the model run on?

It runs on NVIDIA’s next-generation Vera Rubin architecture, which provides 50 petaflops of inference compute per GPU. This platform was specifically designed for sustained, long-context AI workloads like real-time video generation.

Can I use this model right now?

Not yet. The model was shown as a research preview at NVIDIA GTC in March 2026. There’s no public release date or pricing announced. Runway’s current publicly available model is Gen-4.5.

Will this make deepfakes easier to create?

Yes, real-time AI video generation raises serious concerns about deepfakes. A model fast enough for live video calls could enable real-time impersonation. However, the technology still struggles with character consistency, and companies like Sony are developing camera-level authentication to combat AI-generated fakes.


Featured image: AI-generated illustration.
