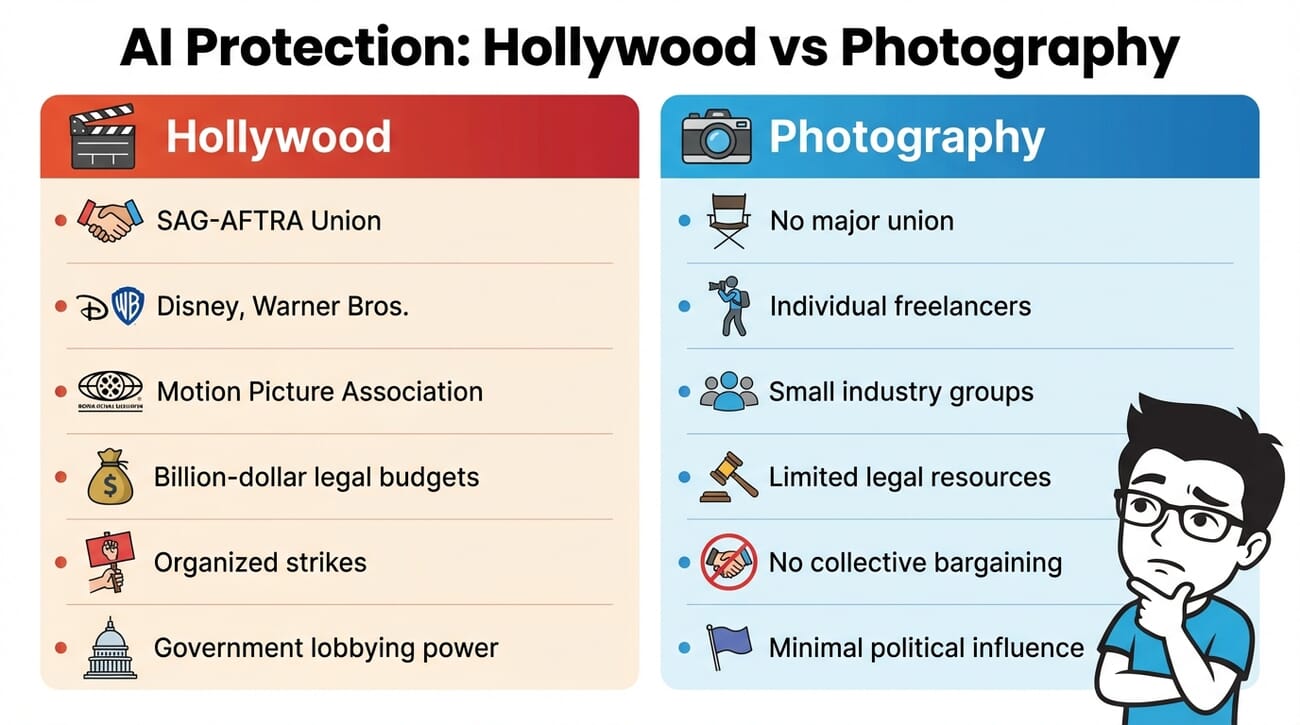

- Hollywood has SAG-AFTRA, Disney, and the MPA fighting AI — photography has no equivalent institutional protection.

- ByteDance’s Seedance 2.0 prompted a cease-and-desist letter from Disney, echoing the crisis photographers have faced for years with Midjourney.

- Whatever legal precedents Hollywood sets will directly impact photographer rights on training data and likeness protection.

- Photographers can act now: register copyrights, embed metadata, use C2PA, and opt out of AI training.

SAG-AFTRA. Disney. Warner Bros. The Motion Picture Association. These are just some of the heavyweight organizations currently fighting back against AI-generated content that imitates Hollywood’s work.

For photographers, the reaction is simple: welcome to the party.

The photography industry has been dealing with AI imitation for years now, ever since tools like Midjourney and Stable Diffusion made it trivially easy to generate images “in the style of” living photographers. But unlike Hollywood, photography doesn’t have billion-dollar studios or powerful labor unions backing the fight.

Here’s what’s happening, why it matters for photographers, and what you can actually do about it.

The Seedance Moment: Hollywood’s Wake-Up Call

In February 2026, ByteDance (TikTok’s parent company) released Seedance 2.0, an AI video generator so capable that users immediately started creating viral clips featuring Hollywood IP. An AI-generated video of Brad Pitt and Tom Cruise fighting each other spread across the internet within days.

Disney’s response was swift: a cease-and-desist letter sent directly to ByteDance’s global general counsel. The Motion Picture Association called it “blatant infringement” and demanded ByteDance “immediately cease its infringing activity.”

ByteDance responded by telling the BBC it “respects intellectual property rights” and would strengthen safeguards. Deadpool writer Rhett Reese summed up the industry’s anxiety: “In next to no time, one person is going to be able to sit at a computer and create a movie indistinguishable from what Hollywood now releases.”

Sound familiar? Photographers have been saying the same thing about still images since 2023.

Photography Has No Army

Here’s the uncomfortable truth: photography, as an industry, simply doesn’t have the institutional power that Hollywood does.

Consider the difference:

- Hollywood has SAG-AFTRA — a labor union representing virtually every major actor, with the power to organize strikes and negotiate industry-wide protections.

- Hollywood has the MPA — the Motion Picture Association, which lobbies governments worldwide and files lawsuits on behalf of studios.

- Hollywood has Disney, Warner Bros., and NBCUniversal — companies with billions in revenue and armies of lawyers ready to fight.

Photography has… individual photographers. Mostly freelancers. No union with real teeth. No equivalent to the MPA. The closest thing to a Disney in the photography world might be Instagram — and as PetaPixel’s analysis points out, “they dumped us years ago.”

Photography is an individualized industry, more similar to music than filmmaking. But even musicians have major labels fighting for their interests. Photographers are largely on their own.

The Problem We Already Know

While Hollywood is just waking up to AI threats, photographers have been living with them. Consider what’s already happened:

- Midjourney revealed that users routinely prompt for images “in the style of” living, active photographers, and the company did nothing to stop it.

- AI image generators were trained on billions of copyrighted photographs scraped from the internet, largely without consent or compensation.

- Stock photography revenue has cratered as businesses replace licensed images with AI-generated alternatives.

- No AI company has voluntarily strengthened safeguards to protect photographers — unlike ByteDance’s (admittedly reluctant) response to Hollywood pressure.

The difference isn’t the severity of the problem — it’s the power to respond. When Disney sends a cease-and-desist, companies listen. When an individual photographer complains, the response is silence.

How Hollywood’s Fight Could Help Photographers

Despite photography’s weaker position, there’s a silver lining: whatever legal precedents Hollywood establishes will likely benefit photographers too.

Here’s what could change:

- Training data compensation. If courts rule that AI companies must pay for using copyrighted films as training data, the same principle would apply to photographs.

- Likeness and style protection. If actors win protections against AI-generated deepfakes and impersonations, photographers could use similar arguments to protect their distinctive styles.

- Opt-out requirements. Industry-wide standards for opting out of AI training — something Hollywood has the leverage to demand — would benefit all creative professionals.

- International enforcement. Hollywood’s ability to pressure Chinese AI companies (like ByteDance and MiniMax) establishes enforcement mechanisms that individual photographers could never achieve alone.

Disney, Warner Bros., and NBCUniversal have already filed an infringement lawsuit against MiniMax, another Chinese AI firm. As copyright lawyer Aaron Moss notes, serving a legal complaint on a Chinese company under the Hague Convention can take up to two years just to reach the starting line. Only organizations with deep pockets and long time horizons can sustain that kind of legal battle.

The Geopolitics Angle

There’s another layer to this story that photographers should watch: the U.S.-China AI competition directly affects which companies — and which rules — will shape the future.

The irony isn’t lost on observers that Disney has been on both sides of this fight:

- Fighting ByteDance over unauthorized use of its IP in Seedance

- Partnering with OpenAI, allowing it to train on Disney IP for Sora, OpenAI’s own AI video generator (which has since been shut down, collapsing the Disney deal)

Which AI platforms come to dominate will shape whether creative professionals have any meaningful protections. A U.S.-based company operating under U.S. copyright law is at least theoretically subject to legal action. A Chinese company operating outside U.S. jurisdiction is a much harder target.

For photographers, this means the outcome isn’t just about art — it’s about whether the legal frameworks that protect creative work will have any teeth in a global AI market.

What Photographers Can Do Right Now

You can’t wait for Hollywood to save you. Here are practical steps to protect your work today:

1. Register Your Copyrights

In the U.S., you can’t file an infringement lawsuit without a registered copyright. The Copyright Office allows batch registration of photographs — it costs $65 for a group of up to 750 published photos. This is your minimum viable protection. (The Supreme Court recently declined to hear an AI copyright case, reinforcing that human authorship remains the bedrock of copyright law.)
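To see what that means for a back catalog, here is a rough sketch of the arithmetic. It assumes the $65 fee and 750-photo cap quoted above; fees change, so treat both constants as placeholders to check against the Copyright Office’s current fee schedule. The function name is hypothetical, and the estimate ignores any eligibility rules for grouping photos.

```python
import math

# Figures quoted in this article; verify them before budgeting.
FEE_PER_GROUP_USD = 65
MAX_PHOTOS_PER_GROUP = 750


def registration_cost(photo_count: int) -> tuple[int, int, float]:
    """Return (filings needed, total fee in USD, fee per photo in USD)."""
    if photo_count <= 0:
        raise ValueError("photo_count must be positive")
    # Each filing covers at most MAX_PHOTOS_PER_GROUP photos.
    filings = math.ceil(photo_count / MAX_PHOTOS_PER_GROUP)
    total = filings * FEE_PER_GROUP_USD
    return filings, total, total / photo_count


filings, total, per_photo = registration_cost(1500)
print(f"{filings} filings, ${total} total, roughly ${per_photo:.2f} per photo")
```

Even for a catalog of several thousand images, the per-photo cost under these assumptions stays at a few cents.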

2. Embed Comprehensive Metadata

Always include IPTC/XMP metadata in your files: creator name, copyright notice, contact information, and usage terms. Tools like Photoshop, Lightroom, and Photo Mechanic make this easy. While metadata can be stripped, it establishes a clear chain of ownership.
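For files handled outside those tools, the same fields can be written to an XMP sidecar file. The sketch below is one possible way to do that with Python’s standard library; it is not how Photoshop or Lightroom work internally. The function names and sample values are hypothetical, and the property names (dc:creator, dc:rights, xmpRights:UsageTerms, and the IPTC Core CreatorContactInfo structure) reflect our reading of the XMP and IPTC Core schemas, so check the output in your own editing software before relying on it.

```python
import xml.etree.ElementTree as ET

# Namespace URIs as we understand them from the XMP and IPTC Core schemas.
NS = {
    "x": "adobe:ns:meta/",
    "rdf": "http://www.w3.org/1999/02/22-rdf-syntax-ns#",
    "dc": "http://purl.org/dc/elements/1.1/",
    "xmpRights": "http://ns.adobe.com/xap/1.0/rights/",
    "Iptc4xmpCore": "http://iptc.org/std/Iptc4xmpCore/1.0/xmlns/",
}
XML_LANG = "{http://www.w3.org/XML/1998/namespace}lang"

for prefix, uri in NS.items():
    ET.register_namespace(prefix, uri)


def q(prefix: str, tag: str) -> str:
    """Build a namespace-qualified tag name for ElementTree."""
    return f"{{{NS[prefix]}}}{tag}"


def add_lang_alt(parent: ET.Element, prefix: str, tag: str, text: str) -> None:
    """Add a language-alternative property (rdf:Alt with one x-default item)."""
    prop = ET.SubElement(parent, q(prefix, tag))
    alt = ET.SubElement(prop, q("rdf", "Alt"))
    ET.SubElement(alt, q("rdf", "li"), {XML_LANG: "x-default"}).text = text


def build_xmp(creator: str, rights: str, usage_terms: str, email: str) -> str:
    """Return an XMP packet body holding creator, copyright, terms, and contact."""
    root = ET.Element(q("x", "xmpmeta"))
    rdf = ET.SubElement(root, q("rdf", "RDF"))
    desc = ET.SubElement(rdf, q("rdf", "Description"), {q("rdf", "about"): ""})

    # Creator name: an ordered list (rdf:Seq) of names.
    seq = ET.SubElement(ET.SubElement(desc, q("dc", "creator")), q("rdf", "Seq"))
    ET.SubElement(seq, q("rdf", "li")).text = creator

    add_lang_alt(desc, "dc", "rights", rights)                 # copyright notice
    add_lang_alt(desc, "xmpRights", "UsageTerms", usage_terms)  # usage terms
    ET.SubElement(desc, q("xmpRights", "Marked")).text = "True"  # flagged as copyrighted

    # Contact information: a structure with a work e-mail field.
    contact = ET.SubElement(
        desc, q("Iptc4xmpCore", "CreatorContactInfo"),
        {q("rdf", "parseType"): "Resource"},
    )
    ET.SubElement(contact, q("Iptc4xmpCore", "CiEmailWork")).text = email
    return ET.tostring(root, encoding="unicode")


print(build_xmp(
    "Jane Doe",
    "© 2026 Jane Doe. All rights reserved.",
    "No use for AI training without written permission.",
    "jane@example.com",
))
```

Saving the returned string next to the image (for example as a `.xmp` file with the same base name) keeps the ownership details with the original even if an exported copy has its embedded metadata stripped.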

3. Use C2PA Content Credentials

The Coalition for Content Provenance and Authenticity (C2PA) standard embeds tamper-evident provenance data into your images. Adobe, Nikon, Leica, and Sony all support it. This doesn’t prevent AI from using your images, but it creates a verifiable record of origin that could matter in future legal proceedings.

4. Opt Out of AI Training

Several platforms now offer opt-out mechanisms or commit to training only on licensed work:

- Spawning.ai: maintains a registry for artists who want to opt out of AI training datasets

- DeviantArt: allows creators to tag work as “not for AI training”

- Adobe Firefly: not an opt-out tool, but a generator trained only on licensed content and Adobe Stock

Are these perfect? No. But they create documented evidence of your intent — which matters if compensation frameworks emerge from Hollywood’s legal battles.

5. Support Industry Organizations

Organizations like the American Society of Media Photographers (ASMP), Professional Photographers of America (PPA), and the Copyright Alliance are the closest things photography has to institutional advocates. They may not have Disney’s budget, but collective membership strengthens their lobbying power.

6. Document Everything

If you find your work being used to generate AI content, document it. Screenshot the prompts, the outputs, and the platforms. If you sell stock photography, track where your images appear in training datasets. This evidence may become valuable as legal frameworks develop.
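One way to keep that documentation consistent is a simple evidence log. The sketch below is only an illustration: the function name, record fields, and file names are hypothetical. It appends one JSON record per screenshot, including a UTC timestamp and a SHA-256 hash of the file. A hash only shows that the file has not changed since it was logged; it does not by itself prove when the screenshot was taken.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def log_evidence(screenshot: Path, platform: str, prompt: str, log_file: Path) -> dict:
    """Append one evidence record to a JSON Lines log and return it."""
    # Fingerprint the screenshot so later copies can be checked against the log.
    digest = hashlib.sha256(screenshot.read_bytes()).hexdigest()
    record = {
        "recorded_at_utc": datetime.now(timezone.utc).isoformat(),
        "platform": platform,
        "prompt": prompt,
        "screenshot": screenshot.name,
        "sha256": digest,
    }
    # One JSON object per line keeps the log append-only and easy to search.
    with log_file.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


# Example call (file names are placeholders):
# log_evidence(Path("output-01.png"), "ExampleGenerator",
#              "portrait in the style of Jane Doe", Path("evidence.jsonl"))
```

Keeping the log and the screenshots together in a dated folder, backed up somewhere you do not edit, makes the record easier to hand to a lawyer or an industry organization later.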

The Audience Factor

There’s one more variable that could determine the outcome: public taste.

Will audiences actually embrace AI-generated content? The early signs are mixed. AI-generated images flood social media, but there’s growing backlash against “AI slop.” Filmmaker Darren Aronofsky’s AI-generated series on the American Revolution drew more controversy than acclaim.

If audiences ultimately reject AI imitations in favor of authentic human creativity — in both film and photography — market forces may accomplish what legal frameworks can’t.

But betting on public taste alone is a risky strategy. Which is why those legal precedents from Hollywood matter so much.

Looking Ahead

Photography is, as PetaPixel bluntly put it, “a courtier serving in the palace of a tyrant” — waiting for the shifting sands to settle. The industry doesn’t control its own fate in this fight.

But that doesn’t mean photographers are powerless. Register your copyrights. Embed your metadata. Support your industry organizations. And pay attention to what Hollywood achieves — because those victories, when they come, will be your victories too.

The dream scenario: Hollywood forces AI companies to compensate creators for training data, to obtain permission before imitating someone’s work, and to build robust opt-out systems. That precedent would reshape the entire creative economy — photography included.

The nightmare: AI companies relocate to jurisdictions beyond legal reach, and creative professionals of all kinds are left without recourse.

The most likely outcome? Something in between. And photographers need to be ready for it.

Can photographers sue AI companies for using their images as training data?

It depends on jurisdiction. In the U.S., several class-action lawsuits are pending against AI companies (including Stability AI and Midjourney), but no definitive ruling has been made yet. Registering your copyright is essential — without it, you can’t file a lawsuit in the U.S.

Do C2PA content credentials prevent AI from using my photos?

No. C2PA embeds provenance metadata into your images to prove origin and authenticity, but it doesn’t technically prevent AI tools from scraping or training on them. However, it creates a verifiable ownership record that could be important evidence in future legal proceedings.

Is there a photography equivalent of SAG-AFTRA?

Not with the same power. The closest organizations are the American Society of Media Photographers (ASMP) and Professional Photographers of America (PPA). They advocate for photographer rights, but they lack the leverage of a labor union backed by major studios.

How will Hollywood’s AI fight affect stock photographers?

If Hollywood establishes that AI companies must compensate creators for training data, stock photographers could benefit from the same precedent. It could also lead to licensing frameworks where photographers are paid when their images contribute to AI model training.

Should I watermark all my online images to prevent AI training?

Watermarks can be removed by AI, so they’re not a reliable protection. A better approach is embedding IPTC metadata, registering copyrights, using C2PA credentials, and opting out of AI training through platforms like Spawning.ai. These create documented evidence of ownership and intent.

Featured image: Photo by Meg von Haartman on Unsplash.
